Björk + Unity: The Making of a Live Mocap Press Conference

The innovative artist and musician Björk wanted to launch her Björk Digital exhibit in London at the end of August. The catch? At the same time, she was needed in Reykjavik. Unable to attend in person, Björk sought a solution that would let her be present despite the distance. With just seven days to go, a team of talented collaborators solved the challenge and helped the artist push the limits of real-time motion capture and explore the not-so-distant possibilities for presence and intimacy that VR promises. This is the story of how the world’s first motion-captured, live-streamed press conference came to be.

The day before, the atmosphere was buzzing with anticipation, excitement and technical challenges, not unlike my interactive arts final show 20 years ago. We were greeted by technical producer and event mastermind Andrew Melchior, who showed us to our temporary backstage lab. Drafted in from Andy Brammall’s field team, I was on hand as the resident Unity expert, alongside Robin Watson and James Dinsdale of the motion capture studio The Imaginarium. Björk was due in the capture suite of The Icelandic Multimedia School in only a few hours to start testing.

As Andrew recounts, the idea for this press launch was born out of a brainstorm between Björk, the director Andrew Huang, her manager Derek Birkett, Ben Lumsden at The Imaginarium and our own Marcos Sanchez here at Unity.

The team was soon joined by the studio Twisted Oak, which had worked on the 2015 Björk VR album. The Imaginarium team had also previously collaborated on the motion capture of Björk’s performance for Warren du Preez and Nick Thornton Jones’s amazing new piece ‘Notget’ and for Andrew Huang’s work on ‘Family’. Soon, the combined efforts of these production teams started to make it look like the motion-captured, live-streamed press conference might just be possible.

Twisted Oak's Devin Horsman and team had developed a superb Unity project, which included a rig of Björk's avatar, a fantastical creation built in conjunction with the artist Andrew Thomas Huang. The plan was to bring this avatar to life as Björk spoke with journalists in the audience.

The rigged model has over 85,000 triangles. Its 120 bones moved inside Unity in real time in response to the motion capture data provided by Björk from 1,900 km away. The avatar uses Unity's Standard Shader for materials and opacity, and cloth and wind systems for the dynamic tendrils.

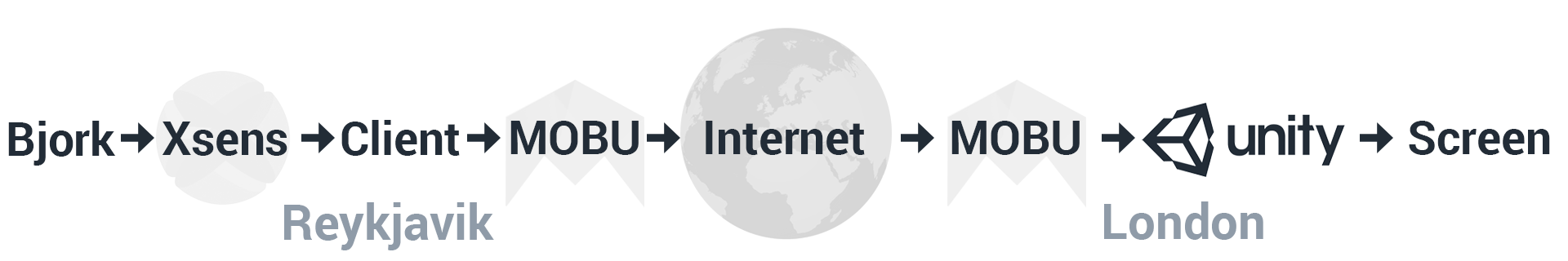

In Iceland, the Xsens MVN suit passed movement from 17 tracking points via the MVN Link client, which streamed locally at 240 Hz into MotionBuilder, the Autodesk animation tool. This in turn sent bone transform information over Internet Protocol to another MotionBuilder install at Somerset House in London. The bone transforms were then retargeted onto a humanoid avatar. Finally, the new bone transforms were streamed locally into the Unity editor to drive the motion of Björk's fantastical avatar in real time.
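
To give a feel for what "sending bone transforms over IP" involves, here is a minimal Python sketch that packs one frame of bone transforms into bytes, unpacks it again, and estimates the raw data rate. The wire layout (a small header plus a position and a rotation quaternion per bone) and all function names are assumptions made for illustration; the article does not describe the format MotionBuilder or the streaming plugin actually used, nor how many bones were sent over the network or at what rate.

```python
import struct

# Hypothetical wire layout, NOT the format used in the real setup:
# header = frame index (uint32) + bone count (uint16), little-endian,
# then per bone 3 floats of position and 4 floats of rotation quaternion.
HEADER = struct.Struct("<IH")
BONE = struct.Struct("<7f")


def pack_frame(frame_index, bones):
    """Serialize a list of (position, rotation) tuples into one packet."""
    parts = [HEADER.pack(frame_index, len(bones))]
    for position, rotation in bones:
        parts.append(BONE.pack(*position, *rotation))
    return b"".join(parts)


def unpack_frame(data):
    """Inverse of pack_frame: returns (frame_index, list of bones)."""
    frame_index, count = HEADER.unpack_from(data, 0)
    bones = []
    offset = HEADER.size
    for _ in range(count):
        values = BONE.unpack_from(data, offset)
        bones.append((values[:3], values[3:]))
        offset += BONE.size
    return frame_index, bones


def bandwidth_bytes_per_second(bone_count, rate_hz):
    """Raw payload rate for this layout, ignoring network overhead."""
    return (HEADER.size + bone_count * BONE.size) * rate_hz
```

Under these assumptions, even the pessimistic case of sending all 120 avatar bones at the suit's 240 Hz rate comes to roughly 0.8 MB per second of payload, which suggests why skeletal data is a far lighter thing to stream than video.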

Audio from Björk was to be streamed over IP and relayed to us in London.

With just a few hours to go on the evening before the live event, we battled internet connections and projector alignment (in German), and fought over chairs. Still, as Gavin Williams from Imaginarium joined the team, we managed a successful test. The Unity streaming plugin written by Robin relayed some pre-canned movement data into the Unity avatar. It worked perfectly - Hurrah! Then Björk arrived, suited up in Reykjavik and endured our demands for over two hours with the utmost patience and cool as we ran a series of successful movement tests and not-so-successful audio tests. We remotely set up a second MoBu/Unity node in Iceland so that Björk could receive visual feedback.

On the big day we started early to allow time for a switch of VoIP client and any last-minute alterations. We had received a set of changes to the project overnight and also needed to script a set of switchable cameras in Unity, to change perspective throughout the talk. We set to work merging the changes and scripting keypad-controlled cameras before installing a totally new audio solution. I was still talking to Björk and directing some movement tests when the press started coming in.
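
The camera switching itself is simple logic: each keypad key maps to one camera, and exactly one camera is active at a time. The sketch below shows that idea in Python purely as an illustration; the class, key names and camera names are hypothetical, and the script used on the day was written for Unity and is not reproduced here.

```python
class CameraSwitcher:
    """Tracks which single camera is active, driven by key presses."""

    def __init__(self, key_to_camera, initial):
        if initial not in key_to_camera.values():
            raise ValueError("initial camera must be one of the mapped cameras")
        self.key_to_camera = dict(key_to_camera)
        self.active = initial

    def on_key(self, key):
        """Switch to the camera bound to this key; ignore unmapped keys."""
        camera = self.key_to_camera.get(key)
        if camera is not None:
            self.active = camera
        return self.active

    def enabled_states(self):
        """Per-camera on/off flags, with only the active camera enabled."""
        return {name: name == self.active for name in self.key_to_camera.values()}


# Hypothetical bindings for three viewpoints on the avatar.
switcher = CameraSwitcher(
    {"keypad1": "wide", "keypad2": "close_up", "keypad3": "profile"},
    initial="wide",
)
```

In a Unity project the same pattern would typically live in a script that reads key presses each frame and enables only the chosen camera.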

...and suddenly we were live with Björk in HD, 4 metres wide, with no noticeable latency from over 1,900 kilometres away, in the world’s first motion-captured press conference. We switched cameras dynamically as Björk relayed her experiences making her latest album and introduced the exhibition. The audience saw Björk’s avatar look, mirror and react, just as if she were there. We punched the air in collective relief as history was made before we even knew it.

A live motion capture stream like this represents a major leap forward for Unity. You can see how, in the not-so-distant future, once the computing power catches up, we’ll be able to bring this same real-time rendering application to VR, placing individuals at the center of real, live performances and entertainment experiences, from concerts to speeches and sports events.

- Unity Labs

- Copyright © 2024 Unity Technologies